On this page I want to summarize some of the advice I usually give to students who want to get started on a thesis project which involves qualitative empirical research. I will focus here more on the technical/logistical aspects of how to manage your data analysis, specifically the coding process, and will largely disregard the broader methodological questions (see this blog post for a good reader on how to do high-quality qualitative research).

What software to use?

To analyze comprehensive data sets and run certain analyses (e.g. automatic coding, code co-occurence), I highly recommend using a qualitative data analyis software (typically abbreviated as QDA). There are a number of different options on the market. Among the most prominent ones are Atlas.ti, NViVo, Dedoose, and MaxQDA. If you want to learn more about those different options and how they compare, there are several good overview charts offered by fairly independent entities like university libraries (see e.g. this chart by Berkley or this resource page by George Mason University). In what follows, I will focus on MaxQDA as the software that I am most familiar and satisfied with.

MaxQDA: Licenses

MaxQDA is a fairly comprehensive software package for qualitative data analysis. While some universities offer licenses to their students, most universities don’t yet. On the MaxQDA website there are different license types listed. As a student, you should be able to get a student license for under 50 EUR. Tipp: check with your supervisor/department if there is a chance to get the costs reimbursed if the university does not offer a QDA license.

MaxQDA: Video Tutorials

There are some excellent video tutorials that will bring you up to speed. I recommend the following four clips.

MaxQDA: Guidebooks

MaxQDA also offers a couple of helpful resource books that will guide you through the process of analyzing your data. I want to highlight three resources specifically (adopted from the MaxQDA guides website):

Qualitative interviews are a very popular data collection method for which the topics of conversation are usually determined in advance and set down in an interview guide. The focused analysis method presented in this textbook provides detailed recommendations on how to analyze interview data in a systematic and methodically controlled manner. The practical procedure for focused interview analysis using the MAXQDA software package is described in six easy-to-follow steps.

- Prepare, organize, and explore your data

- Develop categories for your analysis

- Code your interviews (“basic coding”)

- Develop your category system further and the second coding cycle (“fine coding”)

- Analysis options after coding

- Write the research report and document the analysis process

This guide provides an overview for using MAXQDA with focus groups, and how it can support the collection, analysis, and reporting of focus group data:

- Preparing and importing focus group data

- Creating and importing speaker variables

- Exploring the focus group data

- Focus group coding

- What comes after coding?

- Tips and tricks

Real-world examples from healthcare illustrate the explanations. A checklist for analysis of focus groups with MAXQDA as well as a checklist for conducting focus groups support the realization of your projects.

This guide describes a procedure for analyzing responses to open survey questions with MAXQDA. The procedure does not consider the open-ended questions in isolation from the other data collected, but rather interlinks this qualitative data with the standardized, quantitative data in the sense of a mixed methods approach. The procedure consists of three steps:

- within-case and cross-case exploration

- coding the answers

- category-based analysis

The procedure combines qualitative and quantitative data as well as word-based and category-based procedures and is demonstrated by an example of a mixed methods evaluation of a university course with an online survey. Following this approach, the information on an individual case is always accessible and can be included in a cross-case analysis.

Transcription rules

Interview data needs to be transcribed before it can be made subject to data analysis in MaxQDA. There are different approaches to how spoken language can be converted into written words. Some transcription systems “smoothen” spoken language in the process of converting it into written words by erasing pauses, correcting grammar, and making other modifications in order to produce a conceptually-written text. These are generally referred to as “non-verbatim” or “denaturalized” transcription systems. Other transcription systems aim to provide very nuanced written accounts of spoken language by using several different markers to stay as close as possible to the actual language used (“verbatim” or “naturalized” transcription). The choice of the transcription system should be closely tied to the type of analysis used (if you are not going to analyze the tone of the voice, interruptions, pauses etc., there is no point of transcribing them).

This short chapter by Morey Hawkins (2018) provides a quick introduction to the world of transcription systems. Make sure to disclose the transcription system that you are going to use. These methodological reflections by Da Nascimento & Steinbruch (2019) offer some important insights why it is important to be transparent about your approach to transcription.

Coding unit, rules, and schemes (codebook)

This quick 10-step rundown might be helpful to get to you started with MaxQDA. See in particular the instructions on how to

- define your coding unit (what do you want to code: a sentence, a part of a sentence, or an entire paragraph, …),

- determine coding rules (when do you code and which code do you use?), and

- develop a coding scheme / codebook (short/extended code definition, criteria for when (not) to apply it, an anchor example).

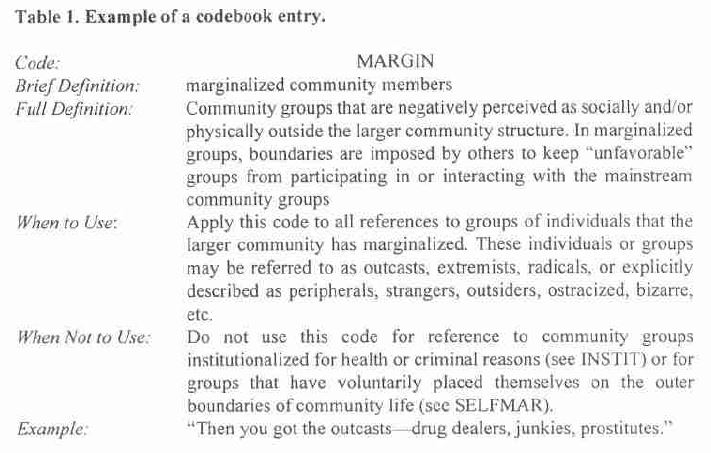

MacQueen et al. (1998) offer an example from their work:

Another example is the following coding scheme used by Berends & Johnston (2005):

An insightful and more detailed account of a collaborative and extensive codebook development can be found in the paper by DeCuir-Gunby, Marshall & McCullock (2011).

Thematic Matrix

A frequently used and helpful way to present results of a thematic analysis is to produce a thematic matrix. In a thematic matrix, you summarize your codings for the most relevant categories (=codes) for all cases. Once all cells are filled, you can then produce a code summary (what did you find in a specific category across all cases) and a case summary (what did you find in a specific case across all categories)?

A visual presentation of this idea is presented in Kuckartz (2014) below:

Intercoder/Intracoder Reliability

There is broad recognition that processes in qualitative research like identifying themes in interview transcripts are influenced by what unique perspective the researcher brings to the data. Beyond this broad recognition, there are divergent viewpoints about how this “subjectivity” should be openly utilized as an asset or controlled as a potential bias. A number of studies have looked at how different researchers identified themes in the same material (as an example see Armstrong et al., 1997). Approaches like “consensual coding” aim to overcome the binary tension by suggesting ways to productively engage with differences in how/what different researchers code by making those differences subject to deliberation with the aim to find convergence (as an example, see Hill et al., 1997).

In some approaches like content analysis in mass media research where a limited number of well-defined concepts are to be identified and coded in empirical data, the need to control for inter-coder reliability (or interrater reliability or also intercoder/interrater agreement) is an important concern. A way to do this is to calculate reliability using online tools like ReCal (see our blog post on this tool). Notably, it is also possible to control for reliability by calculating intra-rater or intra-coder reliability, i.e. by comparing the codings of a single researcher at a time point 1 with the repeated codings of the same material done by that same researcher at point 2.

Here are some readings if you want to dive deeper into the intricacies of reliability in qualitative research.

Potter, W. J., & Levine‐Donnerstein, D. (1999). Rethinking validity and reliability in content analysis. Journal of Applied Communication Research, 27(3), 258–284. https://doi.org/10.1080/00909889909365539

Lombard, M., Snyder-Duch, J., & Bracken, C. C. (2002). Content Analysis in Mass Communication: Assessment and Reporting of Intercoder Reliability. Human Communication Research, 28(4), 587–604. https://doi.org/10.1111/j.1468-2958.2002.tb00826.x

Krippendorff, K. (2004). Reliability in Content Analysis. Human Communication Research, 30(3), 411–433. https://doi.org/10.1111/j.1468-2958.2004.tb00738.x

Belur, J., Tompson, L., Thornton, A., & Simon, M. (2021). Interrater Reliability in Systematic Review Methodology: Exploring Variation in Coder Decision-Making. Sociological Methods & Research, 50(2), 837–865. https://doi.org/10.1177/0049124118799372

Study book

Udo Kuckartz has published an introductory book which is accessible through several university libraries on SAGE at https://methods.sagepub.com/book/qualitative-text-analysis

Kuckartz, U. (2014). Qualitative text analysis: A guide to methods, practice & using software. Sage Publications Ltd.